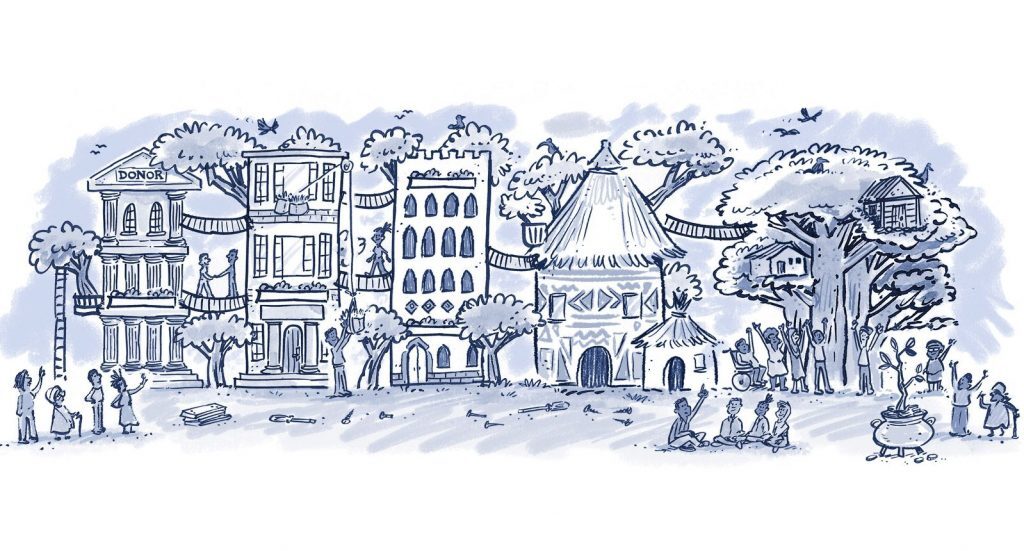

What do we mean by community philanthropy?

Community philanthropy builds on the assets that already exist within communities rather than depending only on what comes from outside. When communities bring their own resources to the table, the power dynamic flattens and the traditional imbalance between donor and beneficiary is challenged.